A Framework Built for Faculty

Three principles that change how AI-assisted work is assessed.

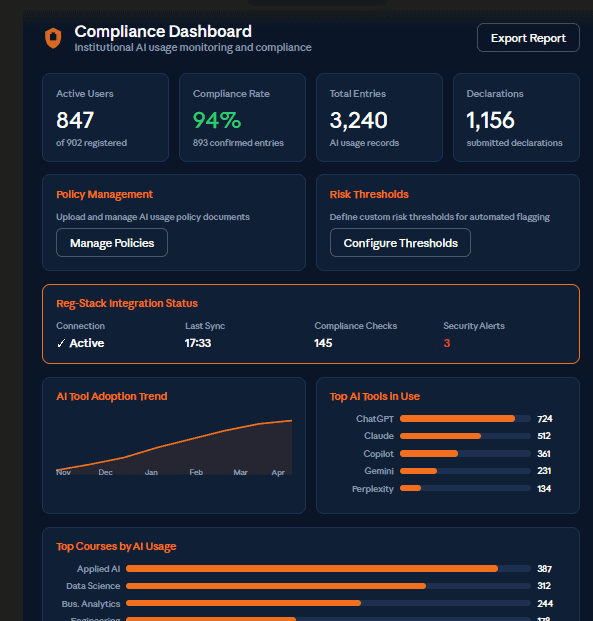

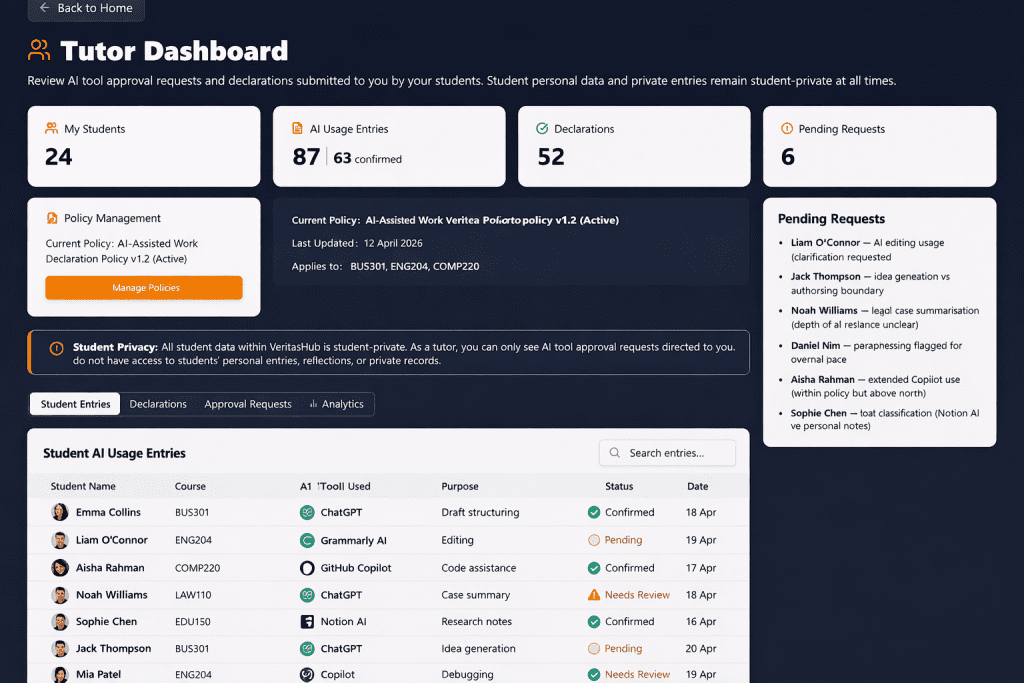

VeritasHub gives you the structured evidence needed to evaluate the thinking behind every AI-assisted submission, not just the output. Assess what matters: how the student engaged with, evaluated, and transformed AI assistance into their own academic contribution. Grade with confidence. Answer appeals with evidence.

Your institutional AI policies are not a PDF in a student handbook. In VeritasHub they are operational — embedded directly into the declaration workflow, applied consistently at the point of submission, and supplemented by leading academic integrity frameworks from peer institutions worldwide. Policy becomes practice. Every time.

Every declaration produces a structured, timestamped, auditable record. When a student submits work, you have documented evidence of which AI tools were used, how they were used, and how the student evaluated and transformed the output. Grade appeals become straightforward. Moderation becomes consistent. Judgement becomes defensible.

VeritasHub is currently accepting applications for its inaugural institutional pilot programme. Places are limited to 20 institutions worldwide across three regions — New Zealand & Australia, United Kingdom & Europe, and North America.

VeritasHub doesn’t manage the AI challenge. It transforms it into an academic asset.

The framework is ready. Your students are ready.